Worked in Demo.

Died in Production.

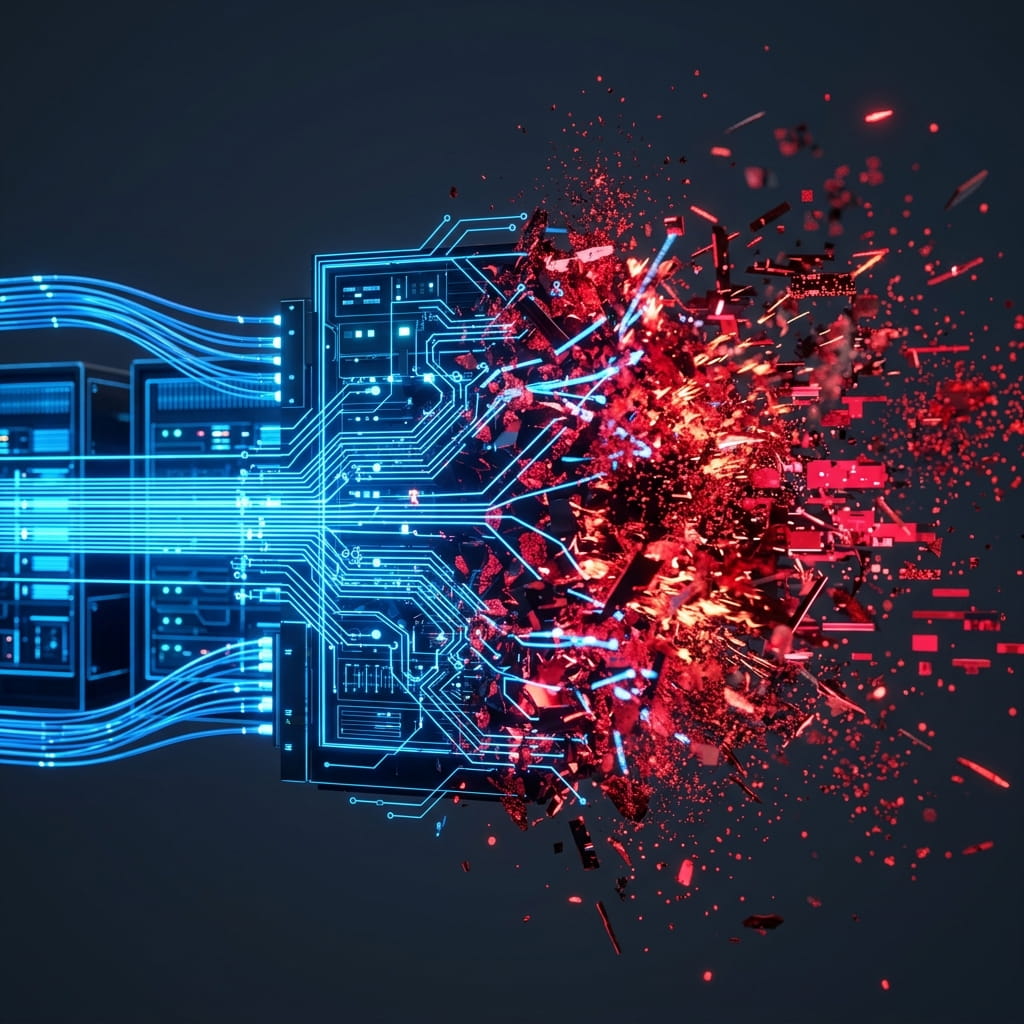

Your MVP survived investor reviews, but the moment real users arrived, the "happy path" shattered. We step into the chaos, reverse-engineer the bottlenecks, and stabilize the system before user trust evaporates.

Request Emergency Triage